Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

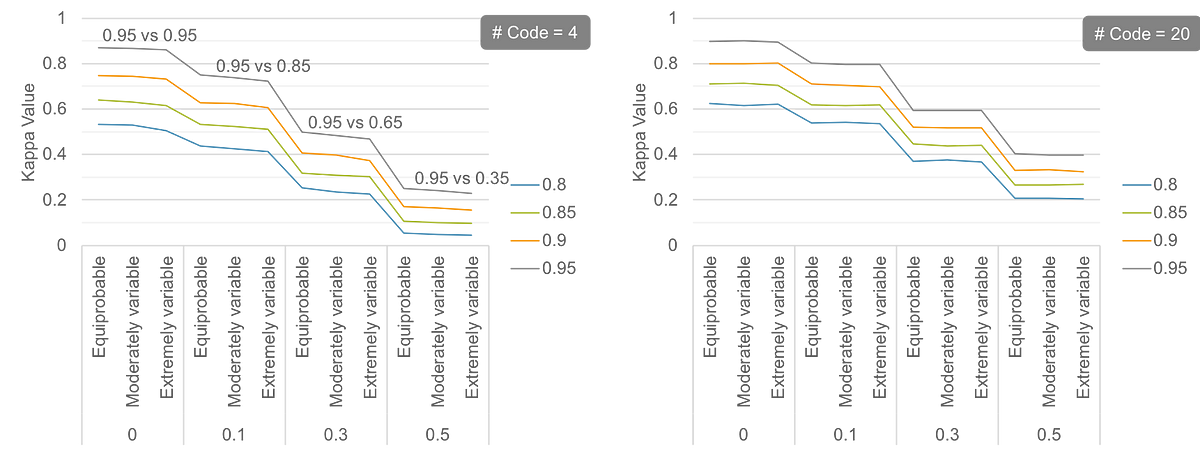

Table 1 from Generalized estimating equations with model selection for comparing dependent categorical agreement data | Semantic Scholar

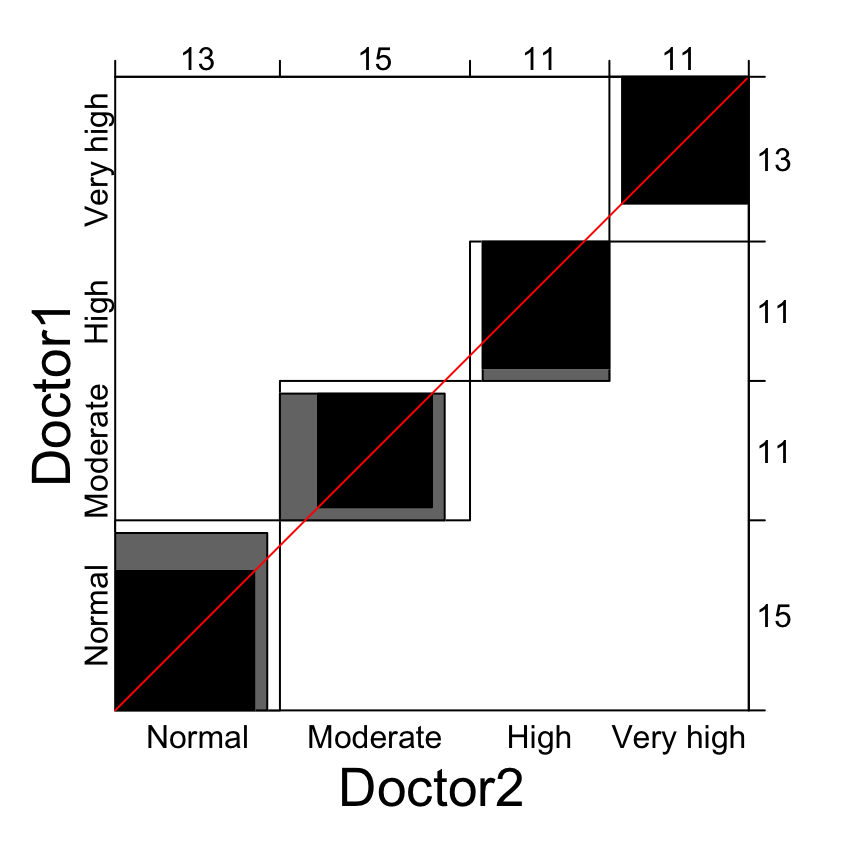

![[Statistics Part 15] Measuring agreement between assessment techniques: Intraclass correlation coefficient, Cohen's Kappa, R-squared value – Data Lab Bangladesh](https://datalabbd.com/wp-content/uploads/2019/06/15a-1.png)

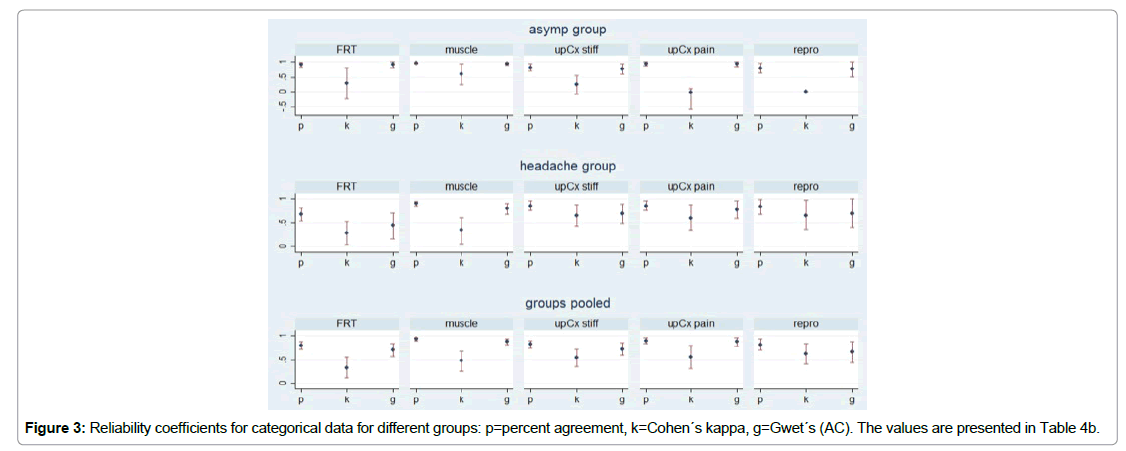

![2. Cohens Kappa [R] Two Pathologist Diagnose (inde... | Chegg.com](https://media.cheggcdn.com/study/c7a/c7ae507f-4041-44ca-b57e-39704c37ba0d/image)